The search landscape is shifting beneath your feet. While you have been optimizing for Google’s algorithm, your buyers have been asking ChatGPT for recommendations. ChatGPT reached 800 million weekly active users by late 2025, doubling from 400 million in just six months. Users now send approximately 2.5 billion prompts each day.

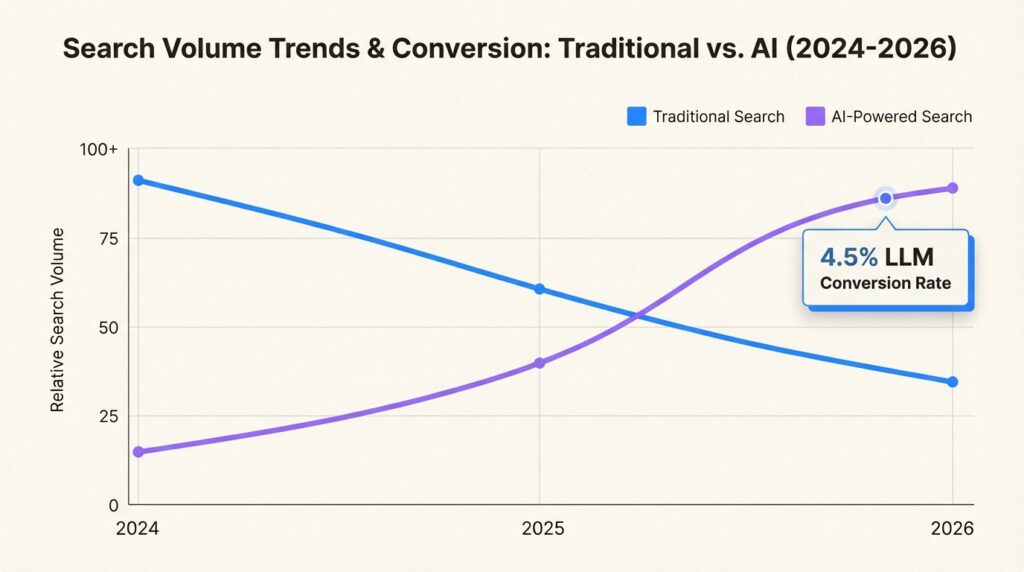

The impact on traditional search is already measurable. Gartner predicts a 25% drop in traditional search volume by 2026, and 73% of B2B websites experienced significant organic traffic loss between 2024 and 2025, with an average decline of 34% in SEO-driven visits.

But here is what makes this different from every other “SEO is dead” prediction you have heard: the traffic that is moving to AI search often converts better. Research from Knotch shows LLM conversion rates more than doubled from September 2024 to June 2025, while organic search conversions declined by 38%. Some businesses report LLM traffic converting above 4.5%, with 20-25% monthly growth in AI-referred visits.

The question is not whether to optimize for LLMs. It is whether you will figure out how to improve your AI content quality score for LLMs before your competitors do.

What Is an AI Content Quality Score?

An AI content quality score is a metric that assesses your content’s likelihood of being understood, cited, and recommended by large language models. Unlike traditional content scores that focus on keyword density and backlink profiles, AI content scoring evaluates how well your content serves as a source for LLM synthesis.

Traditional SEO asks: “Does this page rank for target keywords?” AI content quality asks: “Will ChatGPT or Perplexity cite this when answering user questions?” The difference is fundamental. Content with quotes, statistics, and links to credible data sources is mentioned 30-40% more often in LLM responses than unoptimized content.

The business impact is significant. B2B buyers are adopting AI-powered search at three times the rate of consumers, with 90% of organizations using generative AI in some aspect of their purchasing process. AI-referred visitors spend up to three times longer on vendor sites than those from traditional search engines. They arrive with more context and higher intent.

At Decoding, we have built our AI Content Audit tool specifically to help businesses understand and improve their AI content quality scores. The audit inspects sitemaps to score content quality across pillars like authority, freshness, structure, and snippet extractability.

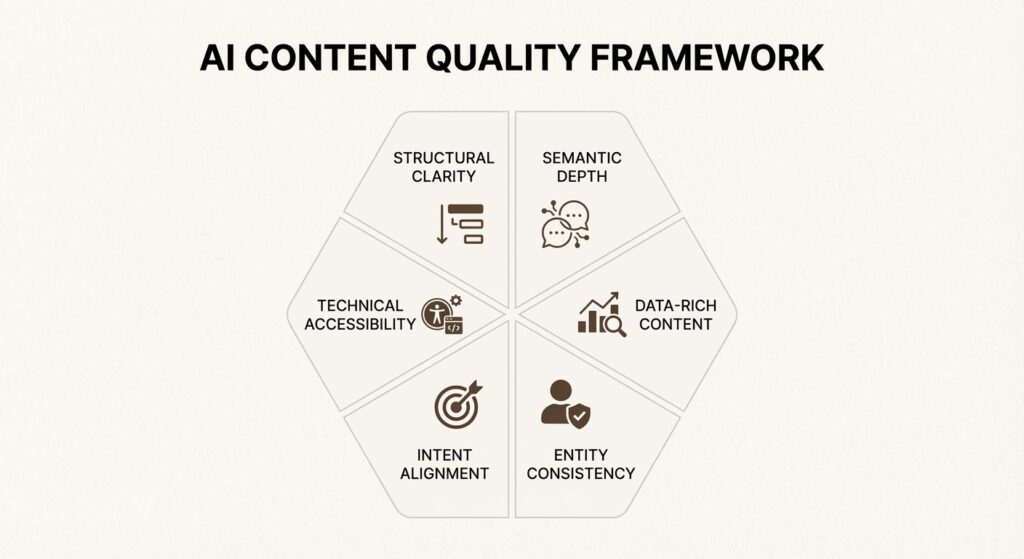

The 6 Pillars of AI Content Quality

Improving your AI content quality score requires a systematic approach across six key dimensions. Let’s break down each pillar and what it means for your content.

Pillar 1: Structural Clarity and Extractability

LLMs parse structured content more effectively than dense, unstructured text. The goal is to make your content machine-readable while remaining valuable to human readers.

Lead with the answer. Content with direct answers at the start of sections is more extractable and preferred by LLMs. Do not bury your key insight in paragraph three. State it immediately, then provide supporting context.

Use structural elements strategically:

- Numbered lists for processes and rankings

- Bullet points for features and benefits

- Tables for comparisons

- Clear H2/H3 hierarchy for topic organization

- Short paragraphs (2-4 sentences) for scannability

Implement schema markup. Pages with FAQ schema, HowTo schema, and other structured data are more likely to appear in AI Overviews and LLM responses. This technical foundation helps LLMs understand the context and relationships within your content.
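As an illustration of FAQ schema, here is a minimal Python sketch that builds a schema.org `FAQPage` object from question-and-answer pairs and wraps it in the `<script type="application/ld+json">` tag that goes into a page’s HTML. The helper names `faq_jsonld` and `script_tag` are hypothetical, and the output should still be checked with a structured data validator before you publish it.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

def script_tag(data):
    """Serialize JSON-LD into the <script> tag embedded in the page's HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text inside an answer cannot close the <script> element early;
    # "<\/" is still valid JSON for the same string.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'

faq = faq_jsonld([
    ("Does improving AI content quality hurt traditional SEO?",
     "No. Structural clarity, semantic depth, and data-rich content help both."),
])
print(script_tag(faq))
```

Generating the markup from the same source as the visible FAQ text helps keep the two in sync.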

Pillar 2: Semantic Depth and Topical Authority

Surface-level content gets ignored by LLMs. You need to cover topics comprehensively, addressing related questions and subtopics that demonstrate expertise.

Include semantically related terms and concepts. If your content is about “project management software,” LLMs expect to see related terms like “task tracking,” “Gantt charts,” “team collaboration,” and “resource allocation.” This semantic richness signals topical authority.
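A rough way to check semantic coverage is to list the related terms you expect and test whether a draft mentions them. The Python sketch below is only a literal, case-insensitive phrase match (not a semantic similarity model), and the term list is your own hypothetical input, so treat its score as a prompt for review rather than a quality measure.

```python
import re

def term_coverage(text, related_terms):
    """Return (share of related terms found, list of missing terms).

    Matching is a case-insensitive whole-phrase search, so it will not credit
    synonyms or inflections (e.g. "Gantt chart" does not match "Gantt charts").
    """
    if not related_terms:
        return 1.0, []
    lowered = text.lower()
    missing = [
        term for term in related_terms
        if not re.search(r"\b" + re.escape(term.lower()) + r"\b", lowered)
    ]
    return (len(related_terms) - len(missing)) / len(related_terms), missing

draft = "Our tool handles task tracking and team collaboration for remote teams."
terms = ["task tracking", "Gantt charts", "team collaboration", "resource allocation"]
score, missing = term_coverage(draft, terms)
print(f"{score:.0%} covered; missing: {missing}")
# 50% covered; missing: ['Gantt charts', 'resource allocation']
```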

Answer related questions within your content. The People Also Ask section in Google search results is a goldmine for understanding what questions users are asking, and therefore what questions LLMs need to answer. Incorporate these naturally into your content structure.

Demonstrate E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness, the quality signals described in Google’s Search Quality Rater Guidelines. Include author bios, publication dates, and citations to credible sources. These signals make it easier for LLMs to recognize your content as coming from a credible, expert source.

Pillar 3: Data-Rich, Specific Content

Generic claims get filtered out. Specific, data-rich content gets cited.

Replace vague language with specific metrics. Instead of “significant increase,” write “27% increase.” Instead of “improved performance,” write “reduced load time from 4.2 seconds to 1.8 seconds.” This specificity makes your content more valuable as a citation source.
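To find vague claims in existing drafts, one simple heuristic is to flag sentences that use a vague phrase but contain no number at all. The Python sketch below assumes a small, hypothetical `VAGUE_PHRASES` list that you would extend for your own style guide; it will miss vague claims phrased differently and cannot tell whether a number is meaningful.

```python
import re

# Hypothetical starter list; extend it with the vague phrases your team overuses.
VAGUE_PHRASES = ("significant increase", "improved performance", "dramatically",
                 "a lot of", "many customers", "substantially")

def flag_vague_sentences(text):
    """Return sentences that use a vague phrase and contain no digit at all."""
    # Split after ., ! or ? followed by whitespace (so "4.2" stays intact).
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        lowered = sentence.lower()
        is_vague = any(phrase in lowered for phrase in VAGUE_PHRASES)
        has_number = re.search(r"\d", sentence) is not None
        if is_vague and not has_number:
            flagged.append(sentence)
    return flagged

draft = ("We saw a significant increase in signups. "
         "Load time fell from 4.2 seconds to 1.8 seconds.")
print(flag_vague_sentences(draft))  # ['We saw a significant increase in signups.']
```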

Include original research, benchmarks, and case studies. SaaS companies that include specific metrics in their content see a 27% increase in LLM citations. Case studies influence 73% of purchases, with an average lead quality score of 8.7 out of 10.

Cite credible sources with links. When you reference industry statistics or research, link to the original source. This not only supports your claims but also helps LLMs associate your content with authoritative sources.

Pillar 4: Entity Consistency Across Platforms

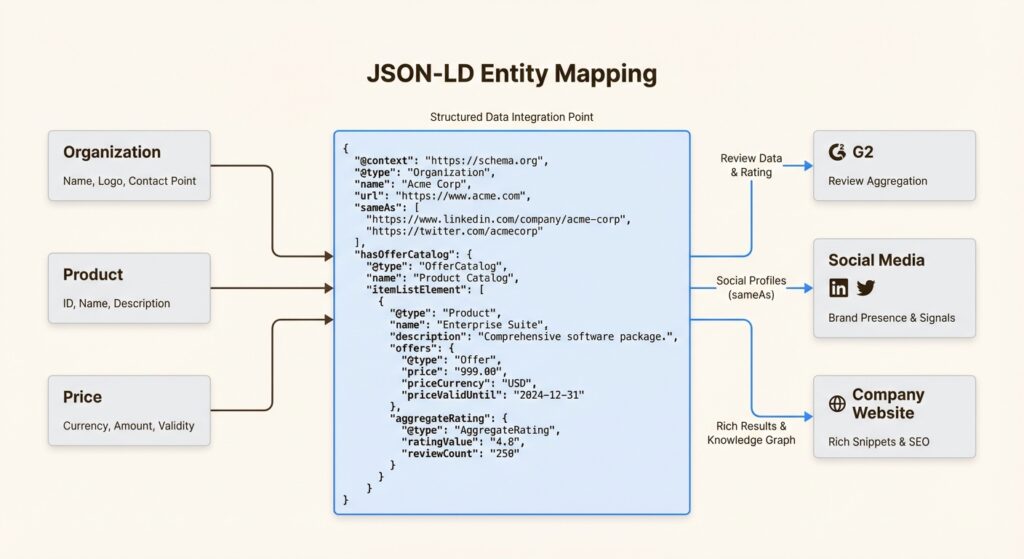

LLMs rely on consistent entity definitions to accurately represent brands and products. When your messaging varies across platforms, LLMs may produce inaccurate or confused responses.

Maintain consistent product names, pricing descriptions, and feature lists across your website, social media, and third-party directories. If your product is called “Pro Suite” on your website but “Professional Plan” on G2, LLMs may treat these as different offerings.
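One way to audit this is to collect how each platform describes your product and compare the values field by field. In this Python sketch the `listings` data is hypothetical and would come from your own manual or scripted collection; values are normalized only for case and whitespace, so “Pro Suite” and “Professional Plan” are reported as a conflict while “Pro  Suite” (with an extra space) is not.

```python
import re
from collections import defaultdict

def normalize(value):
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", value).strip().lower()

def inconsistent_entities(listings):
    """Find fields whose values differ across platforms.

    listings maps platform -> {field: value}. Returns {field: {normalized_value: [platforms]}}
    for every field that has more than one distinct normalized value.
    """
    variants = defaultdict(lambda: defaultdict(list))
    for platform, fields in listings.items():
        for field, value in fields.items():
            variants[field][normalize(value)].append(platform)
    return {field: dict(vals) for field, vals in variants.items() if len(vals) > 1}

listings = {  # hypothetical data collected from each platform
    "website": {"product_name": "Pro Suite", "price": "$99/month"},
    "G2": {"product_name": "Professional Plan", "price": "$99/month"},
    "LinkedIn": {"product_name": "Pro  Suite", "price": "$99/month"},
}
print(inconsistent_entities(listings))
# {'product_name': {'pro suite': ['website', 'LinkedIn'], 'professional plan': ['G2']}}
```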

Use JSON-LD structured data to define entities clearly. Schema markup helps LLMs understand that “Acme Corp” is the organization, “Pro Suite” is the product, and “$99/month” is the pricing. This structured approach reduces ambiguity.
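For the Acme Corp example, the JSON-LD might link an `Organization`, a `Product`, and an `Offer` in a single `@graph`, with the product’s `brand` pointing at the organization’s `@id`. This Python sketch uses real schema.org types and properties, but the `#organization` identifier convention, the example URL, and the choice to express “per month” as a `UnitPriceSpecification` with UN/CEFACT unit code `MON` are assumptions to verify against your own schema requirements.

```python
import json

def product_jsonld(org_name, org_url, product_name, monthly_price, currency="USD"):
    """Link an Organization, its Product, and a monthly Offer as schema.org entities."""
    org_id = org_url.rstrip("/") + "/#organization"  # stable ID reused by other entities
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id, "name": org_name, "url": org_url},
            {
                "@type": "Product",
                "name": product_name,  # use the exact name you publish everywhere else
                "brand": {"@id": org_id},
                "offers": {
                    "@type": "Offer",
                    "price": monthly_price,
                    "priceCurrency": currency,
                    "priceSpecification": {
                        "@type": "UnitPriceSpecification",
                        "price": monthly_price,
                        "priceCurrency": currency,
                        "unitCode": "MON",  # UN/CEFACT code for "month"
                    },
                },
            },
        ],
    }

print(json.dumps(product_jsonld("Acme Corp", "https://example.com/", "Pro Suite", "99.00"), indent=2))
```

Reusing one `@id` for the organization across pages is what lets the separate entities resolve to the same brand.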

Pillar 5: Intent Alignment and Query Matching

AI search users ask longer, more complex queries. Searches with 4 or more words trigger Google AI Overviews 60% of the time, a higher rate than shorter keyword-based queries. Users of AI-powered search tools ask queries averaging 15 to 23 words.

Think about what this means. Users are not typing “project management software.” They are asking “What project management tool works best for a 50-person remote team that needs to integrate with Slack and has a budget under $20 per user?”

Structure your content to answer these long-form queries. Include FAQ sections that address specific use cases, pricing scenarios, and integration questions. Match user intent, whether it is informational, navigational, or transactional.

Pillar 6: Technical Accessibility

Even the best content cannot be cited if LLMs cannot access it. Technical fundamentals matter more than you might expect.

Fast page load impacts citation frequency. Pages that load faster get quoted up to three times more frequently by AI systems. Use tools like our Free AI Crawler to check your site’s technical health.

Ensure proper robots.txt configuration. Some websites inadvertently block AI crawlers while allowing Googlebot. Review your robots.txt to ensure AI bots from OpenAI, Anthropic, and Perplexity (such as GPTBot, ClaudeBot, and PerplexityBot) can access your content.
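You can approximate this check with Python’s standard `urllib.robotparser` module, which answers whether a given user agent may fetch a URL under a robots.txt file. The crawler tokens below are ones OpenAI, Anthropic, and Perplexity document at the time of writing and may change; the sample robots.txt is hypothetical. Note that Python applies the first matching rule in a group, whereas RFC 9309 gives precedence to the longest matching path, so results can differ when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for AI crawlers (verify against each vendor's crawler docs).
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot")

def crawler_access(robots_txt, url, agents=AI_CRAWLERS + ("Googlebot",)):
    """Return {user_agent: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

robots = """\
User-agent: Googlebot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""
print(crawler_access(robots, "https://example.com/blog/post"))
# {'GPTBot': False, 'OAI-SearchBot': True, 'ClaudeBot': True, 'PerplexityBot': True, 'Googlebot': True}
```

To test a live site instead of pasted text, `RobotFileParser` can also fetch the file itself via `set_url()` followed by `read()`.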

Maintain HTTPS, mobile optimization, and clean HTML structure. These technical signals indicate a well-maintained site that LLMs can trust as a source.

How to Implement the AI Content Quality Framework

Now that you understand the six pillars, here is how to put them into practice.

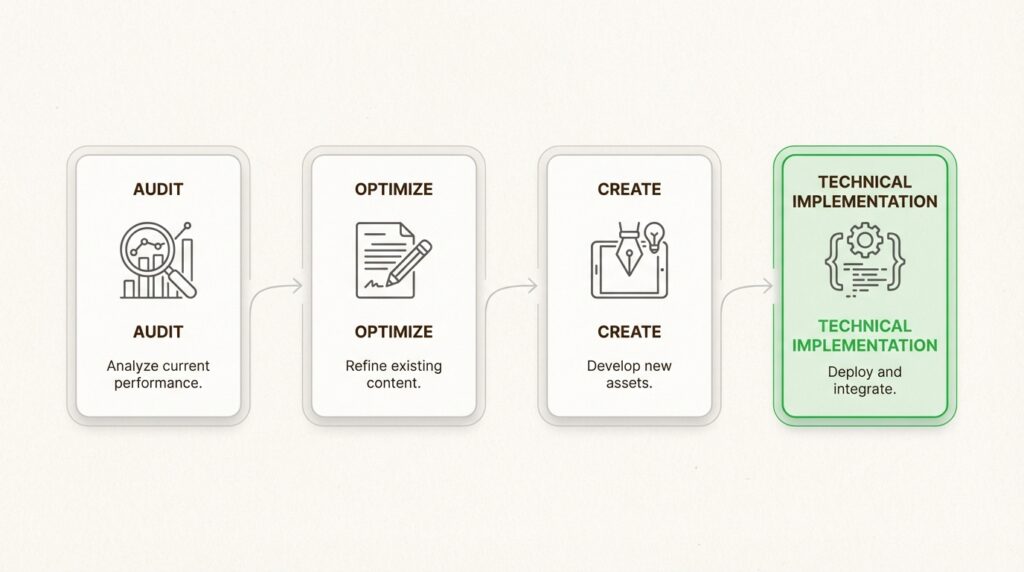

Step 1: Audit Your Current Content

Start by understanding where you stand. Use our Free AI Content Audit to baseline your current AI content quality scores. The audit scans your sitemap and evaluates content across the six pillars we have discussed.
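As a hypothetical illustration of the freshness part of such an audit (not the audit tool’s actual implementation), this Python sketch reads a standard XML sitemap and lists URLs whose `<lastmod>` is older than a chosen threshold, plus URLs with no `<lastmod>` at all. The one-year threshold is an arbitrary assumption, the sketch assumes each `<lastmod>` includes at least a full date, and it does not follow sitemap index files.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def stale_urls(sitemap_xml, today, max_age_days=365):
    """Return (url, lastmod) pairs that look stale in a <urlset> sitemap.

    A URL is stale if its <lastmod> is older than max_age_days; URLs with no
    <lastmod> are included with lastmod=None because they send no freshness signal.
    """
    root = ET.fromstring(sitemap_xml)
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for entry in root.findall("sm:url", SITEMAP_NS):
        loc = entry.findtext("sm:loc", namespaces=SITEMAP_NS)
        lastmod_text = entry.findtext("sm:lastmod", namespaces=SITEMAP_NS)
        if lastmod_text is None:
            stale.append((loc, None))
            continue
        # W3C datetime values may include a time; keep only the YYYY-MM-DD part.
        lastmod = date.fromisoformat(lastmod_text.strip()[:10])
        if lastmod < cutoff:
            stale.append((loc, lastmod))
    return stale
```

With the sitemap text fetched separately, a call such as `stale_urls(sitemap_text, today=date.today())` gives a first list of pages to review.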

Identify content with declining organic traffic. If you have seen traffic drops over the past year, those pages are prime candidates for AI quality optimization. Map your existing content to target LLM queries. What questions would someone ask that your content should answer?
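If you export organic sessions (or clicks) per page for two comparable periods, a few lines of Python can shortlist the pages with the steepest declines. The 20% drop and 100-session floor below are arbitrary, hypothetical thresholds; the floor keeps low-traffic pages from dominating the list with noisy percentages.

```python
def declining_pages(sessions_before, sessions_after, min_drop=0.2, min_sessions=100):
    """Return (url, fractional_drop) for pages whose organic sessions fell by >= min_drop.

    sessions_before / sessions_after map URL -> sessions for two comparable periods.
    Pages with fewer than min_sessions in the earlier period are skipped as too noisy.
    A page missing from sessions_after is treated as having 0 sessions.
    """
    results = []
    for url, before in sessions_before.items():
        if before < min_sessions:
            continue
        after = sessions_after.get(url, 0)
        drop = (before - after) / before
        if drop >= min_drop:
            results.append((url, round(drop, 3)))
    # Largest declines first: these are the first candidates for optimization.
    return sorted(results, key=lambda item: item[1], reverse=True)

before = {"/pricing": 1200, "/blog/pm-guide": 800, "/blog/old-news": 40}
after = {"/pricing": 1150, "/blog/pm-guide": 480}
print(declining_pages(before, after))  # [('/blog/pm-guide', 0.4)]
```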

Step 2: Apply the 6-Pillar Checklist to Existing Content

Work through your high-priority pages systematically. Restructure for extractability by moving key points to the beginning of sections. Add specific metrics and data points where you currently have vague claims. Implement schema markup for FAQs, how-tos, and articles.

Check entity consistency across your site. Do product names match exactly? Are pricing descriptions uniform? This consistency helps LLMs build accurate knowledge about your offerings.

Step 3: Create New Content with AI Quality Built-In

Research LLM-visible topics using AI search query patterns. What are people asking ChatGPT about your industry? Tools like our Query Fan-Out Detector can help identify these query patterns.

Outline with self-contained sections. Each section should be able to stand alone as a potential citation. Draft with specific data and citations included from the start. Review against the 6-pillar framework before publishing.

Step 4: Technical Implementation

Add JSON-LD structured data to your key pages. This markup helps LLMs understand the entities, relationships, and context of your content. Optimize Core Web Vitals to ensure fast loading times. Use our Free AI Crawler to verify AI bot accessibility and identify any technical barriers.

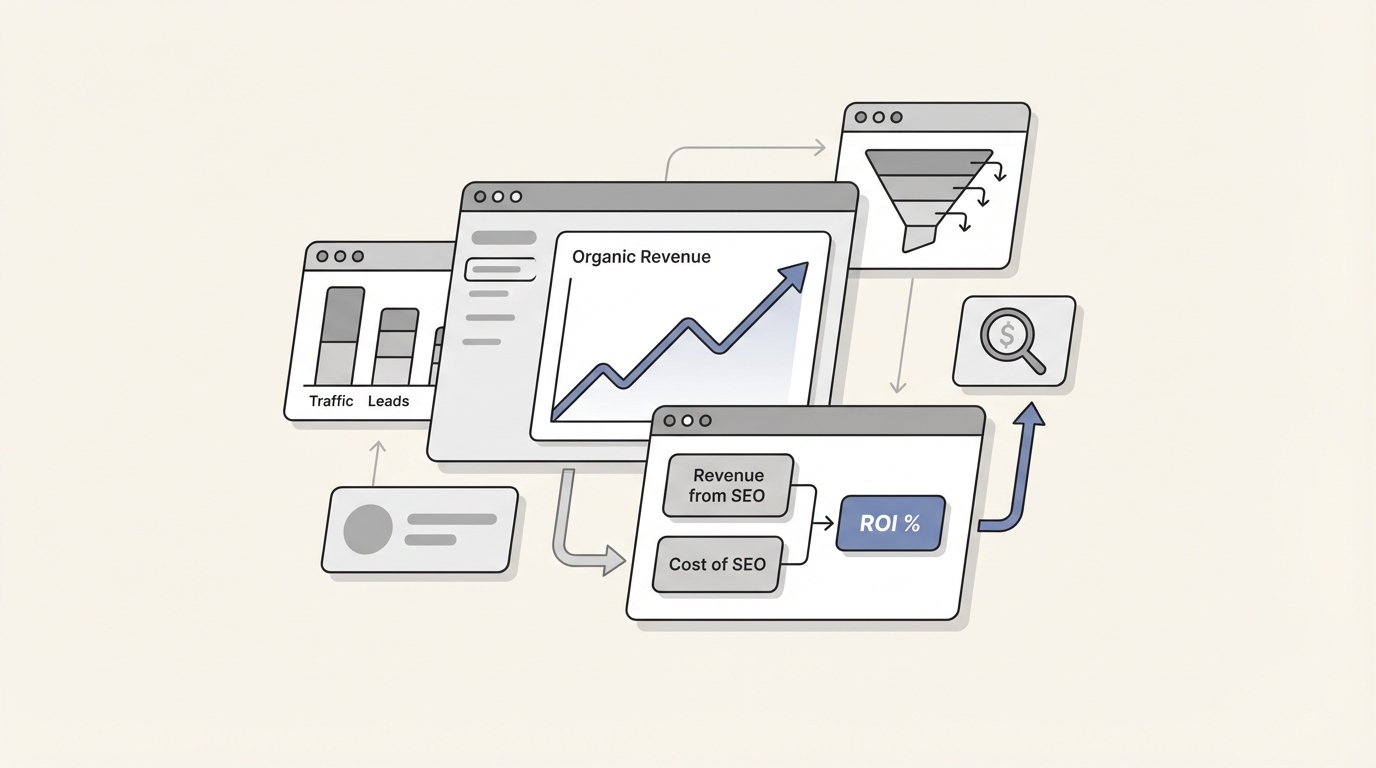

Measuring and Tracking Your AI Content Quality Score

Traditional analytics miss a significant portion of AI traffic. When AI Overviews are present, click-through rates drop to just 8%, compared to 15% for traditional search results. Your content might be influencing AI responses without generating any trackable traffic.

Here is how to measure your AI content quality effectively.

Tools for Measuring AI Visibility

Use a dedicated ChatGPT Visibility Tracker to monitor how often your brand appears in LLM responses. This tool scrapes AI search results to show you exactly when and how your content is being cited.

Conduct manual query testing across ChatGPT, Perplexity, and Claude. Run your target queries monthly and document whether your brand appears, how it is positioned, and what context is provided.

Analyze referral traffic from AI platforms. Use GA4 to track referral traffic from chatgpt.com, perplexity.ai, and other AI domains. While imperfect, this gives you a general sense of AI-driven traffic trends.
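When you work with exported referral data, a small lookup can label each referrer by AI platform. The domain list in this Python sketch is an assumption based on the domains these assistants are commonly served from; platforms change domains, and some AI-driven visits arrive with no referrer at all, so they show up as direct traffic and are missed here.

```python
from urllib.parse import urlparse

# Assumed referrer domains per AI platform; review and extend this periodically.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def ai_platform(referrer_url):
    """Return the AI platform for a referrer URL, or None if the domain is not listed."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any subdomain (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return platform
    return None

referrers = ["https://chatgpt.com/", "https://www.perplexity.ai/search", "https://www.google.com/"]
print([ai_platform(r) for r in referrers])  # ['ChatGPT', 'Perplexity', None]
```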

Key Metrics to Track

Monitor citation frequency in LLM responses. How often does your brand get mentioned for target queries? Track brand mention sentiment. Are LLMs citing you positively, negatively, or neutrally?

Measure AI-referred traffic growth. Even if the absolute numbers are small, growth rate matters. Track query coverage: how many of your target queries show your brand in AI responses?
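If you log your monthly manual tests in a structured way, citation frequency, query coverage, and mention sentiment fall out of simple counts. The `QueryTest` record and its fields in this Python sketch are hypothetical; sentiment is whatever label you assign when reviewing each response.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class QueryTest:
    """One manual test: a target query run on one platform."""
    query: str
    platform: str
    brand_mentioned: bool
    sentiment: str = "neutral"  # your own label: "positive", "neutral", or "negative"

def citation_metrics(tests):
    """Return (citation frequency per platform, query coverage, sentiment counts).

    citation frequency: share of runs on each platform that mention the brand
    query coverage: share of distinct queries with a mention on at least one platform
    sentiment counts: labels of the runs that mention the brand
    """
    runs, hits = Counter(), Counter()
    for t in tests:
        runs[t.platform] += 1
        hits[t.platform] += t.brand_mentioned  # True counts as 1
    frequency = {p: hits[p] / runs[p] for p in runs}
    queries = {t.query for t in tests}
    covered = {t.query for t in tests if t.brand_mentioned}
    coverage = len(covered) / len(queries) if queries else 0.0
    sentiment = Counter(t.sentiment for t in tests if t.brand_mentioned)
    return frequency, coverage, sentiment
```

Comparing these numbers month over month is more informative than any single snapshot, since LLM answers vary between runs.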

Set up a monthly measurement cadence. AI search is evolving rapidly, so regular monitoring is essential. Our AI Visibility Audit can automate much of this tracking for you.

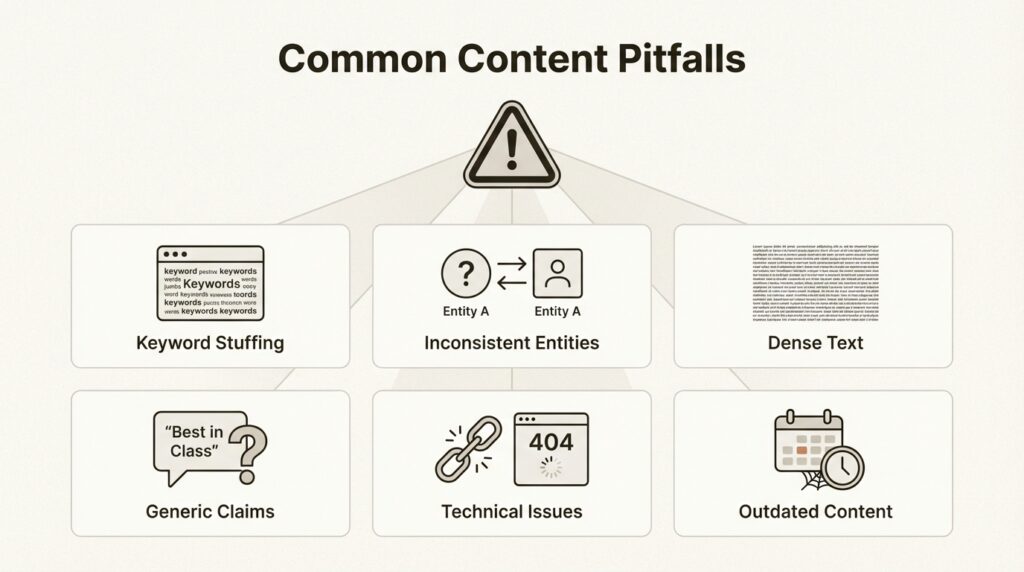

Common Mistakes That Lower Your AI Content Quality Score

Even experienced content teams make these errors when optimizing for LLMs.

Keyword stuffing hurts your AI content quality score. LLMs penalize unnatural language that reads like it was written for algorithms rather than humans. Write naturally and let semantic relevance emerge from comprehensive coverage.

Inconsistent entity definitions confuse LLMs. If your product name varies across platforms, LLMs may fragment their understanding of your offering. Audit your presence across your website, social profiles, and third-party directories.

Dense, unbroken text without structural markers is hard for LLMs to parse. Use headings, lists, and short paragraphs to create clear extraction points.

Generic content without specific data or insights gets filtered out. Every claim should be backed by specific metrics, examples, or citations.

Ignoring technical fundamentals creates invisible barriers. Slow load times and crawler blocks prevent LLMs from accessing your content at all.

Outdated content without freshness signals loses relevance. Include publication dates and “last updated” timestamps, and refresh content regularly.

Advanced Tips for Maximizing LLM Citations

Once you have mastered the fundamentals, these advanced strategies can accelerate your AI visibility.

Create comparison content. X versus Y comparisons perform exceptionally well because LLMs frequently need to provide users with alternatives when they are researching tools. These formats naturally include multiple entities and specific differentiators.

Publish original research and data studies. When you are the primary source for industry statistics, every LLM citation of that data points back to you. This creates a compounding visibility effect.

Build topical clusters with internal linking. Connect related content to demonstrate comprehensive coverage of a subject area. This cluster approach signals topical authority to LLMs.

Optimize for featured snippet-style extraction. Structure content with clear definitions, step-by-step processes, and concise answers that LLMs can extract directly.

For enterprise implementation, consider our AI and SEO services. We help organizations build systematic AI visibility programs that go beyond basic optimization.

Start Improving Your AI Content Quality Score Today

The shift to AI search is not coming. It is here. ChatGPT’s 800 million weekly users represent a fundamental change in how people discover information and make purchasing decisions.

The six-pillar framework gives you a systematic approach to improving your AI content quality score:

- Structure content for extractability with clear hierarchies and schema markup

- Build semantic depth and topical authority through comprehensive coverage

- Include specific data and metrics rather than vague claims

- Maintain entity consistency across all platforms

- Align with user intent and long-form query patterns

- Ensure technical accessibility for AI crawlers

Your immediate action items: audit your current content using our Free AI Content Audit, identify your highest-priority pages for optimization, and begin applying the 6-pillar framework systematically.

The businesses that master AI content quality now will capture a disproportionate share of the growing AI-referred traffic. Those that wait risk becoming invisible in the channels where their buyers are increasingly active.

Ready to understand your current AI visibility? Get a free AI visibility audit and see exactly how your content performs across the six pillars. Or explore our guide to AI search optimization for more strategies on building visibility in the AI-first search era.

Frequently Asked Questions

How long does it take to see results after improving AI content quality for LLMs?

Results typically appear within 4-8 weeks as AI platforms re-crawl and re-index your content. However, this varies by platform: ChatGPT’s browsing feature reflects changes much sooner than its underlying training data does. Consistent publication of high-quality content accelerates visibility gains.

Can I use traditional SEO tools to measure AI content quality for LLMs?

Traditional SEO tools miss most AI traffic because LLM citations often do not generate clickable links. You need specialized AI visibility tracking tools like our ChatGPT Visibility Tracker to monitor brand mentions and citations in AI responses.

Does improving AI content quality for LLMs hurt my traditional SEO performance?

No. The strategies that improve AI content quality (structural clarity, semantic depth, and data-rich content) also benefit traditional SEO. Google increasingly uses similar signals to evaluate content quality. The two approaches are complementary.

How much does it cost to implement an AI content quality improvement program?

Costs vary based on your content volume and current state. Basic implementation using free tools like our AI Content Audit and manual optimization requires time investment but minimal budget. Enterprise programs with dedicated tracking and professional services typically start at $2,000-$5,000 per month.

Which AI platforms should I prioritize when optimizing content quality for LLMs?

Prioritize based on your audience. ChatGPT has the largest user base and prefers third-party directories. Gemini behaves more like traditional search and favors brand-owned websites. Perplexity prioritizes niche, industry-specific sources. A portfolio approach across all three is most effective.

How is AI content quality for LLMs different from traditional content quality scoring?

Traditional content scoring focuses on keyword density, backlinks, and on-page SEO factors. AI content quality scoring evaluates extractability, semantic relevance, citation potential, and synthesis value. The goal is not just ranking but becoming a trusted source that LLMs cite and recommend.

What is the most common mistake businesses make when trying to improve AI content quality for LLMs?

The most common mistake is treating AI optimization as a separate initiative from content strategy. Effective AI content quality improvement requires integrating the six pillars into your standard content workflow, not treating it as a one-time fix or add-on activity.
